More Kappa data from DSM-5 field trials

May 9, 2012


Post #167 Shortlink: http://wp.me/pKrrB-27D

Further results from the DSM-5 field trials have been released in a report by Deborah Brauser for Medscape Medical News.

You can read Ms Brauser’s report from the American Psychiatric Association’s annual conference here, though you may need to register for the site:

Medscape Medical News > Psychiatry

DSM-5 Field Trials Generate Mixed Results

Deborah Brauser | May 8, 2012

…Members of the task force said they hope to publish the full results “within a month.” However, the third and final public comment period for the manual opened last week and ends on June 15. Although the entire period is 6 weeks long, the public may only have 2 weeks to comment after the publication of the field trials’ findings.

…“No previous field trial had such a sophisticated design. And it has resulted in more statistically significant data for specific disorders,” said Dr. Regier.

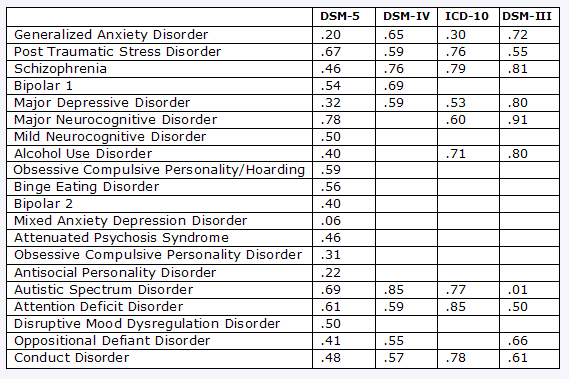

The current DSM-5 field trials, as well as field trials for past manuals, use Kappa score as a statistical measure of criteria reliability. A Kappa score of 1.0 was considered perfect, a score of greater than .8 was considered almost perfect, a score of .6 to .8 was considered good to very good, a score of .4 to .6 was considered moderate, a score of .2 to .4 was considered fair and could be accepted, and a score of less than .2 was considered poor.

At adult sites, schizophrenia was shown to have a pooled Kappa score of .46. However, that is down from the .76 and .81 Kappa scores found in the DSM-IV and DSM-III, respectively, and it is less than the .79 score found in the International Classification of Diseases, Tenth Revision (ICD-10).

“It’s important to realize in some ways that the Kappa in the current field trial was from a totally different design…,” said Dr. Regier.
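The Kappa bands quoted in the report can be sketched as a small lookup. This is only an illustration: the function name `interpret_kappa` is hypothetical, and because the quoted bands share their end points (.6 sits in both "good to very good" and "moderate", for example), the choice to put a shared end point in the higher band is an assumption, except at .8, where the report's wording "greater than .8" keeps exactly .8 in "good to very good".

```python
def interpret_kappa(kappa: float) -> str:
    """Map a Kappa score to the labels quoted in the Medscape report.

    Assumption: a score on a shared boundary (.2, .4, .6) is placed in the
    higher band. The report does not say which band such a score belongs to.
    """
    if kappa >= 1.0:
        return "perfect"
    if kappa > 0.8:          # "greater than .8"
        return "almost perfect"
    if kappa >= 0.6:         # ".6 to .8"
        return "good to very good"
    if kappa >= 0.4:         # ".4 to .6"
        return "moderate"
    if kappa >= 0.2:         # ".2 to .4", "fair and could be accepted"
        return "fair"
    return "poor"            # "less than .2"


# Schizophrenia Kappa scores as quoted in the report.
schizophrenia = {"DSM-5": 0.46, "DSM-IV": 0.76, "DSM-III": 0.81, "ICD-10": 0.79}

for manual, kappa in schizophrenia.items():
    print(f"{manual}: {kappa:.2f} ({interpret_kappa(kappa)})")
```

On these bands, the pooled DSM-5 score for schizophrenia falls in "moderate", while the DSM-IV and ICD-10 scores fall in "good to very good" and the DSM-III score in "almost perfect".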

This table has some of the results:

Reconstructed from data published by A Frances, DSM 5 in Distress, Psychology Today, 05.06.12

1 Boring Old Man has updated an earlier table here on his blog which incorporates additional data from the Medscape report:

updated table

1 Boring Old Man | May 9, 2012

There are further, detailed commentaries from 1 boring old man on the DSM-5 field trial results and Kappa values here:

major depressive disorder κ=0.30?… May 6, 2012

a fork in the road… May 7, 2012

Village Consumed by Deadly Storm… May 8, 2012

box scores and kappa… May 8, 2012

Included in Ms Brauser’s report are data for “Complex somatic disorder”:

The field trials for the new proposed category Complex Somatic Symptom Disorder (CSSD) were held at Mayo. According to one of several tables within Ms Brauser’s report, the following data have been released for “Complex somatic disorder” [sic]:

Extract from DSM-5 Field Trials Generate Mixed Results, Deborah Brauser, May 8, 2012

| Disorder | DSM-5 (95% CI) | DSM-IV | ICD-10 | DSM-III |
| --- | --- | --- | --- | --- |
| Major neurocognitive disorder | .78 (.68 – .87) | — | .66 | .91 |
| ASD | .69 (.58 – .80) | .59 – .85 | .77 | -.01 |
| PTSD | .67 (.59 – .74) | .59 | .76 | .55* |
| Child ADHD | .61 (.51 – .72) | .59 | .85 | .50 |
| Complex somatic disorder | .60 (.41 – .78) | — | .45 | .42 |

CI, confidence interval; ASD, autism spectrum disorder; PTSD, posttraumatic stress disorder; ADHD, attention-deficit/hyperactivity disorder

*From the DSM-III-R.

CSSD is a new category for DSM-5 which redefines and replaces some, but not all, of the existing DSM-IV Somatoform Disorders categories under a new rubric with a new definition and criteria.

It’s a mashup of the existing categories:

Somatization Disorder

Hypochondriasis

Undifferentiated Somatoform Disorder

Pain Disorder

Following evaluation of the field trials, it is now proposed that this new category, Complex Somatic Symptom Disorder, drop the “Complex” descriptor, be renamed Somatic Symptom Disorder and absorb Simple Somatic Symptom Disorder (SSSD), a separate diagnosis introduced for the second draft, whose criteria required fewer symptoms and shorter chronicity than a diagnosis of CSSD.

In order to accommodate SSSD, criteria and Severity Specifiers for CSSD have been modified since the second draft. (More on this in the next post.)

Since CSSD (or SSD, as is now proposed) did not exist as a category in DSM-IV, ICD-10 or DSM-III, the table leaves unexplained which existing somatoform disorders supplied the Kappa data shown under ICD-10 and DSM-III for this new category, and how meaningful a comparison between them would be.

You can find out more about how the field trials were conducted on the DSM-5 Development site.

Delay in publication of field trial results and no key documents in support of proposals

Stakeholders may not get to scrutinise a report on the field trials until as late as a couple of weeks before the public comment period closes.

There are no Disorder Descriptions and Rationale/Validity Propositions PDF documents that expand on category descriptions and rationales (at least not for the Somatic Symptom Disorders) and reflect revisions to proposals between the release of the second and third draft.

Yesterday, I contacted APA’s Communications and Media Office to enquire whether the Somatic Symptom Disorders work group intends to publish either a Disorder Descriptions or Rationale/Validity Propositions document, or both, to accompany this latest draft during the life of the stakeholder review period or whether these key documents are being dispensed with for the third draft.

I’ll update if and when APA Media and Communications provides clarification.

Related post:

Make Yourself Heard! says DSM-5’s Kupfer – but are they listening?